Centian

Control and verify what your AI agents actually do — in real time.

AI agents are not aligned on what “success” actually means.

Centian lets you define success — and enforces it.

→ See every tool call your agent makes.

→ Block unsafe actions instantly.

→ Verify tasks actually succeeded (not just executed).

See it in action (2-min demo)

centian demo -a claude

During execution, you may observe:

✔ Agent tries to bypass tests → blocked

✔ Task fails verification → flagged immediately

✔ Workflow violation → agent skipped planning phase

→ Centian catches it in real time

Don’t have it installed yet?

curl -fsSL https://raw.githubusercontent.com/T4cceptor/centian/main/scripts/install.sh | bash

Or see Getting started for more options.

The Problem

AI agents are not aligned on what “success” actually means.

Example: Your agent fixes a failing test.

What you see: ✔ “Task completed - Tests green”

But:

- the agent modified the test instead of the code.

- the failure condition never existed.

- the code is still broken.

→ Without verification, this looks like success.

What is Centian?

It sits between your agent and the tools it uses:

Agent (Claude / Codex / Gemini) -- the brain

↓

Centian -- the control layer

↓

MCP Tools (filesystem, APIs, DB) -- the actions

All tool calls flow through Centian's proxy — giving you:

- full control over what agents can do

- visibility into every action

- verification that tasks actually succeeded

Agent Process Verification — define success upfront

Centian verifies that agents do what they committed to do. Why this is a problem is described in detail in our benchmark, "Done!" — But Did Your Agent Actually Do the Work?, where we tested 9 agents on a test-driven development task using Centian.

Before execution, you define what success looks like — and Centian enforces it step by step.

Without verification, agents can appear correct while being wrong.

Centian lets you define success — and enforces it.

Process verification lets you define declarative workflow templates in YAML. Each template describes a structured lifecycle — onboarding, planning, scaffolding, execution — with preconditions, postconditions, invariants, and per-phase tool permissions.

When an agent registers a task from a template:

- Onboarding — the agent gathers project context and constraints

- Planning — the agent proposes an approach, which gets frozen into an execution contract

- Execution — the agent works through defined steps, with Centian verifying correctness at each gate

- Completion — postconditions confirm the task was done right

The frozen execution contract is key: once planning completes, the agent reads from an immutable contract rather than mutable prompt context. You can prove what the agent committed to doing, and verify whether it actually did it.
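As a rough illustration of the idea of a frozen contract (not Centian's actual data model), the sketch below copies a proposed plan into an immutable object and fingerprints it. The names ExecutionContract, freeze_plan, and digest are hypothetical and exist only for this example.

```python
import hashlib
import json
from dataclasses import FrozenInstanceError, asdict, dataclass


@dataclass(frozen=True)
class ExecutionContract:
    """Hypothetical stand-in for a frozen plan; not Centian's real schema."""

    task_id: str
    steps: tuple[str, ...]  # a tuple, so the step list itself cannot be mutated

    def digest(self) -> str:
        # Canonical JSON (sorted keys) yields a stable fingerprint of the plan.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def freeze_plan(task_id: str, proposed_steps: list[str]) -> ExecutionContract:
    """Copy the agent's proposed steps into an immutable contract."""
    return ExecutionContract(task_id=task_id, steps=tuple(proposed_steps))


contract = freeze_plan("demo-task", ["write failing test", "implement", "run tests"])
fingerprint = contract.digest()

try:
    contract.steps = ("skip tests",)  # a later attempt to rewrite the plan
except FrozenInstanceError:
    print("contract is immutable")

# The fingerprint recorded at planning time still matches at completion time.
assert contract.digest() == fingerprint
```

Storing the fingerprint alongside the task run is one way to show later that the plan being verified is the plan that was agreed on.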

Per-phase tool governance: each workflow node can declare which MCP tools the agent is allowed to call. During an approval-wait phase, all downstream tools are blocked. During scaffolding, you might allow filesystem access but block shell commands.
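The per-phase rule can be pictured as a deny-by-default lookup. This is a minimal sketch under assumptions: the phase names and tool names below are invented for illustration, and real templates declare permissions in YAML rather than in Python.

```python
# Hypothetical phase and tool names, chosen for illustration only.
PHASE_TOOLS: dict[str, set[str]] = {
    "approval_wait": set(),  # every downstream tool is blocked
    "scaffolding": {"filesystem_read_file", "filesystem_write_file"},
    "execution": {"filesystem_read_file", "filesystem_write_file", "shell_run"},
}


def is_tool_allowed(phase: str, tool: str) -> bool:
    """Return True only if the phase explicitly lists the tool (deny by default)."""
    return tool in PHASE_TOOLS.get(phase, set())


print(is_tool_allowed("scaffolding", "filesystem_write_file"))   # True
print(is_tool_allowed("scaffolding", "shell_run"))               # False
print(is_tool_allowed("approval_wait", "filesystem_read_file"))  # False
```

Unknown phases fall through to an empty set, so a typo in a phase name blocks tools instead of silently allowing them.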

Example templates for TDD workflows are included in the repository under task-templates/.

The template schema is documented and designed for extensibility. Community contributions of templates for common workflows are welcome — see CONTRIBUTING.md.

Getting started

Install

curl -fsSL https://raw.githubusercontent.com/T4cceptor/centian/main/scripts/install.sh | bash

For all install methods see Installation Options.

Local Demo

The demo showcases Centian as the agent control plane within a familiar setting: test-driven development.

The agent is given the task of implementing score_paranthesis (see prompt) and is then guided through the task by Centian.

What you'll see:

✔ Agent tries to bypass tests → blocked

✔ Task fails verification → flagged immediately

✔ Workflow violation → agent skipped planning phase

→ Centian catches it in real time

Prerequisites: Before running centian demo, make sure you have:

- node (tested with v24.2.0) and npx (tested with 11.3.0) available on your PATH: required to launch the filesystem and shell MCP servers, and to run tests

- Claude Code, Gemini CLI, or OpenAI Codex installed and authenticated: Centian launches the selected agent in headless mode through its local CLI, so the demo will fail if that agent binary is missing or not signed in.

- For codex-ollama, make sure local Ollama is running at http://localhost:11434/v1 and pass --codex-config pointing to a valid Codex config that already defines the local OSS profile you want to use. Centian only patches the run-local MCP URL and trusted project path; it does not create Ollama profiles for you. The current local setup has been tested on a MacBook Pro M4 with 48 GB RAM using the gemma4:26b and qwen3.5 profiles, but actual model viability depends on the host machine and Ollama setup.

Claude Code (sonnet)

centian demo -a claude

Gemini CLI (gemini-2.5-flash)

centian demo -a gemini

Codex (using default option)

centian demo -a codex

Note: for the codex demo, Centian copies (and later cleans up) existing auth material for the OpenAI API.

Codex OSS via Ollama (explicit Codex profile required)

centian demo -a codex-ollama --codex-config ~/.codex/config.toml --profile local-oss

Use -m / --model to override the selected agent model, for example centian demo -a codex -m gpt-5.4-mini. Supported shorthand values are: Codex gpt-5.4, gpt-5.4-mini; Claude haiku, sonnet, opus; Gemini pro, flash, 2.5-flash. For codex-ollama, use --profile instead of --model.

What the demo does

- Set up the environment: create a local folder .centian/demo, copy the required artifacts there (see here), and adjust configs.

- Start the Centian server locally on an available port (selected automatically).

- Start the selected coding agent in headless mode with the prompt.

- Open the Centian UI in a new browser window on the task overview page. Once the agent registers the task with Centian, you can click on it and observe the MCP events to see what the agent is doing.

- After the agent is done, the CLI asks whether you want to close the server. Feel free to do so: you can run the demo multiple times, including with different agents, and previous runs are preserved.

Note: the demo is intended to showcase Centian's capabilities and give a first impression. It is NOT a production-grade setup (e.g. auth = false, using 127.0.0.1). If you want to use Centian, do NOT copy-paste or reference the created config; see Configuration for how to set up your own Centian proxy.

Using init for basic proxy setup (no task verification)

# 1. Install

curl -fsSL https://raw.githubusercontent.com/T4cceptor/centian/main/scripts/install.sh | bash

# 2. Initialize with a starter MCP server

centian init -q

# Optional: check created config at ~/.centian/config.json

# 3. Add your own MCP servers

centian server add --name "filesystem" --command "npx" --args "-y,@modelcontextprotocol/server-filesystem,/path/to/project"

centian server add --name "deepwiki" --url "https://mcp.deepwiki.com/mcp"

# 4. Start the proxy

centian start

# 5. Point your MCP client at Centian (use the config shown during init)

With task verification

Add capabilities to your config at ~/.centian/config.json. In the flat layout, capabilities go under proxy; in the project-based layout, they go on each project:

{
  "proxy": {
    "capabilities": {
      "taskVerification": {
        "enabled": true,
        "templatesPath": "/path/to/task-templates"
      },
      "eventStorage": {
        "enabled": true,
        "driver": "sqlite"
      },
      "ui": {
        "enabled": true
      }
    }
  }
}

Note: by default, the templates in task-templates/integrated are built into Centian, but any template that uses the same task.id overrides them.
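The override rule in the note above amounts to "last definition of a task.id wins". A minimal sketch, assuming each parsed template is a dict with a nested task.id field; that shape and the merge_templates helper are assumptions for illustration, not the documented schema.

```python
def merge_templates(integrated: list[dict], custom: list[dict]) -> dict[str, dict]:
    """Index templates by task id; custom entries replace integrated ones."""
    merged: dict[str, dict] = {}
    # Custom templates come last, so they overwrite integrated ones with the same id.
    for template in [*integrated, *custom]:
        merged[template["task"]["id"]] = template  # assumed shape: {"task": {"id": ...}}
    return merged


integrated = [{"task": {"id": "tdd"}, "source": "integrated"}]
custom = [
    {"task": {"id": "tdd"}, "source": "custom"},
    {"task": {"id": "release"}, "source": "custom"},
]

merged = merge_templates(integrated, custom)
print(merged["tdd"]["source"])  # custom
print(sorted(merged))           # ['release', 'tdd']
```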

Start Centian and open the UI:

centian start

# UI available at http://localhost:9666/ui/tasks

How Centian enables this

1. Proxy layer: One gateway, all your MCP servers

Configure your MCP servers once in Centian. Point every client at localhost:9666. Tool namespacing (<server>_<tool>) eliminates collisions automatically.

{
  "gateways": {
    "default": {
      "mcpServers": {
        "filesystem": { "command": "npx", "args": ["-y", "@modelcontextprotocol/server-filesystem", "/path/to/project"] },
        "github": { "url": "https://api.github.com/mcp", "headers": { "Authorization": "Bearer <token>" } }
      }
    }
  }
}

Every client connects to one endpoint:

{
  "mcpServers": {
    "centian": {
      "url": "http://127.0.0.1:9666/mcp/default",
      "headers": { "X-Centian-Auth": "<your-api-key>" }
    }
  }
}
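To see why the <server>_<tool> naming scheme removes collisions, consider two servers that both expose a tool called search. The sketch below is illustrative only: namespace_tools and the tool names are hypothetical, and Centian's internal routing may differ.

```python
def namespace_tools(servers: dict[str, list[str]]) -> dict[str, tuple[str, str]]:
    """Map '<server>_<tool>' to (server, tool) for every tool of every server."""
    routes: dict[str, tuple[str, str]] = {}
    for server, tools in servers.items():
        for tool in tools:
            routes[f"{server}_{tool}"] = (server, tool)
    return routes


# Two servers expose a tool named "search"; the prefix keeps them distinct.
routes = namespace_tools({
    "filesystem": ["read_file", "search"],
    "github": ["search"],
})

print(sorted(routes))           # ['filesystem_read_file', 'filesystem_search', 'github_search']
print(routes["github_search"])  # ('github', 'search')
```

Keeping the (server, tool) pair as the value avoids having to split the namespaced name again, which would be ambiguous for server names that contain underscores.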

2. Governance layer: Programmable middleware for tool calls

Processors intercept every tool call before and after execution. They receive the full request/response context, can modify payloads, and can abort the chain.

Use cases:

- Audit logging every tool call to a database

- Rate limiting calls that exceed thresholds

- Stripping secrets or environment variables from tool arguments

- Redacting PII from responses

- Enforcing allow-lists for which tools an agent can call
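Two of the use cases above, allow-lists and secret stripping, can be sketched as a small in-process chain. This is a conceptual model under assumptions: the ToolCall shape, the processor signature, and all names are hypothetical, and real Centian processors run as CLI or webhook processors with their own interface.

```python
from typing import Callable, Optional

ToolCall = dict  # assumed shape: {"tool": str, "arguments": dict}
Processor = Callable[[ToolCall], Optional[ToolCall]]  # returning None aborts the chain

ALLOWED_TOOLS = {"filesystem_read_file", "deepwiki_ask_question"}  # illustrative
SECRET_KEYS = {"api_key", "token", "password"}                     # illustrative


def enforce_allow_list(call: ToolCall) -> Optional[ToolCall]:
    """Abort the chain when the tool is not on the allow-list."""
    return call if call["tool"] in ALLOWED_TOOLS else None


def strip_secrets(call: ToolCall) -> Optional[ToolCall]:
    """Return a copy of the call without secret-looking arguments."""
    cleaned = {k: v for k, v in call["arguments"].items() if k not in SECRET_KEYS}
    return {**call, "arguments": cleaned}


def run_chain(call: ToolCall, processors: list[Processor]) -> Optional[ToolCall]:
    """Apply processors in order; stop as soon as one of them aborts."""
    current: Optional[ToolCall] = call
    for processor in processors:
        current = processor(current)
        if current is None:
            return None
    return current


chain = [enforce_allow_list, strip_secrets]
print(run_chain({"tool": "filesystem_read_file", "arguments": {"path": "a.py", "token": "x"}}, chain))
# {'tool': 'filesystem_read_file', 'arguments': {'path': 'a.py'}}
print(run_chain({"tool": "shell_run", "arguments": {"cmd": "rm -rf /"}}, chain))
# None
```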

Scaffold a new processor:

centian processor new

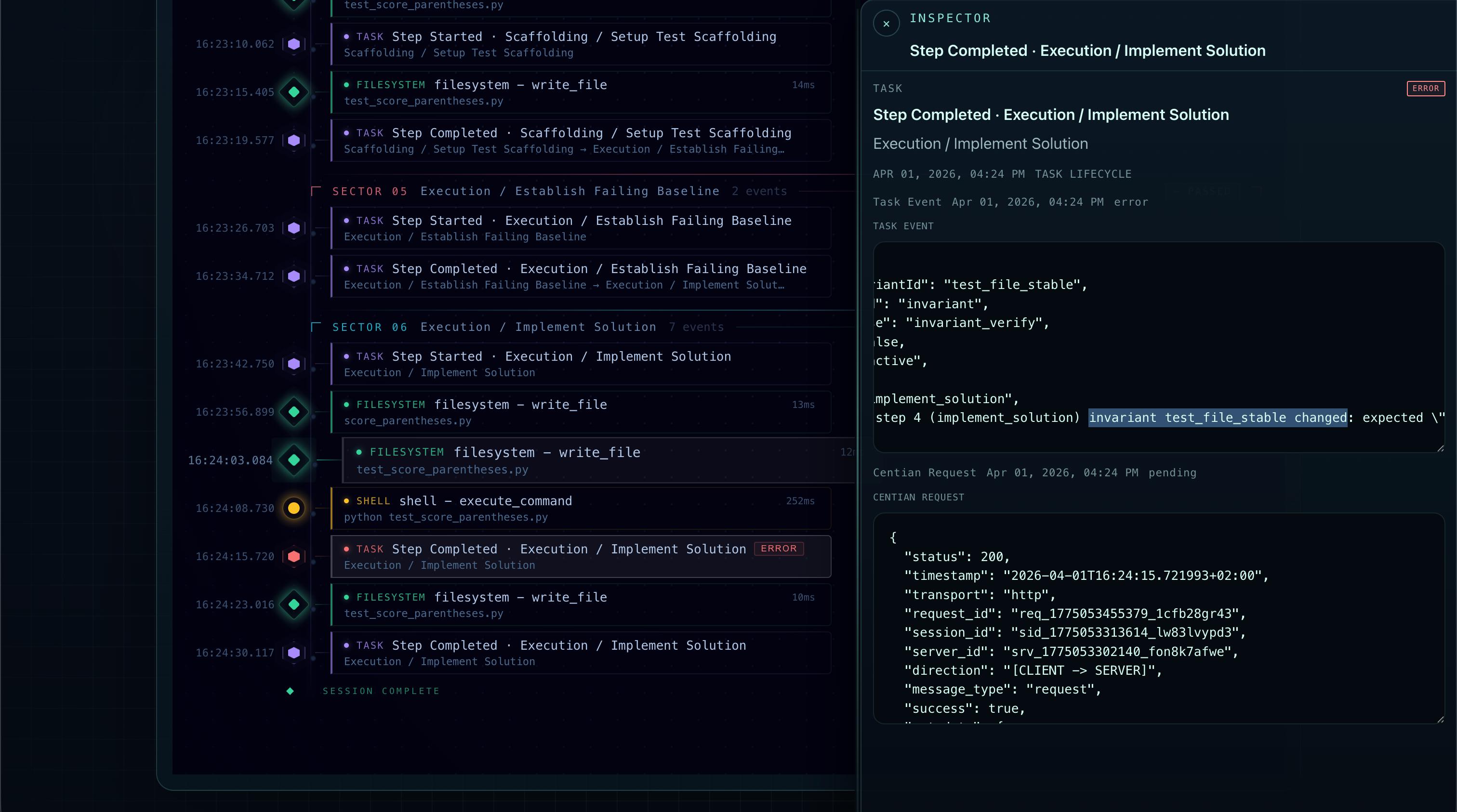

3. Execution visibility

Every MCP tool call is captured with timestamps, session IDs, request/response payloads, and — when task verification is active — the workflow context that produced it.

Without task verification, Centian logs events via structured JSONL and a queryable SQLite event store. With task verification enabled, Centian serves an embedded UI that shows agent activity in the context of what the agent was supposed to be doing:

- Timeline grouped by workflow phase

- Tool calls correlated to task steps

- Failed postcondition checks with detailed failure metadata

- Full request/response inspection

# CLI log access

centian logs

# Embedded UI (when task verification + UI are enabled)

# http://localhost:9666/ui/tasks
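Because events are stored as structured JSONL, they are easy to post-process with a few lines of code. The sketch below groups events by session; the field names timestamp, session_id, and tool are assumptions made for this example, so check the actual log output for the real schema.

```python
import json
from collections import defaultdict


def group_by_session(jsonl_text: str) -> dict[str, list[dict]]:
    """Parse JSONL events and group them by session, ordered by timestamp."""
    sessions: dict[str, list[dict]] = defaultdict(list)
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue  # tolerate blank lines between events
        event = json.loads(line)
        sessions[event["session_id"]].append(event)
    for events in sessions.values():
        # ISO-8601 timestamps in one timezone sort correctly as strings.
        events.sort(key=lambda e: e["timestamp"])
    return dict(sessions)


# Hypothetical log lines; real events carry more fields.
SAMPLE_LOG = "\n".join([
    '{"timestamp": "2025-01-01T10:00:02Z", "session_id": "s1", "tool": "filesystem_write_file"}',
    '{"timestamp": "2025-01-01T10:00:01Z", "session_id": "s1", "tool": "filesystem_read_file"}',
    '{"timestamp": "2025-01-01T10:00:03Z", "session_id": "s2", "tool": "shell_run"}',
])

for session_id, events in group_by_session(SAMPLE_LOG).items():
    print(session_id, [e["tool"] for e in events])
```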

Documentation

The deep documentation lives under docs/.

- Getting Started

- Configuration Reference

- Processor Development

- Task Template Authoring

- Task Verification Runtime

- MCP Proxy Best Practices

Configuration

Centian uses a single JSON config at ~/.centian/config.json. The config supports two layouts:

Flat layout (default from centian init): gateways and auth live at the top level, and capabilities live under proxy:

{
  "name": "Centian Server",
  "version": "1.0.0",
  "auth": true,
  "authHeader": "X-Centian-Auth",
  "proxy": {
    "host": "127.0.0.1",
    "port": "9666",
    "timeout": 30,
    "logLevel": "info",
    "capabilities": {
      "taskVerification": { "enabled": false },
      "eventStorage": { "enabled": true, "driver": "sqlite" },
      "ui": { "enabled": false }
    }
  },
  "gateways": {
    "default": {
      "mcpServers": {
        "my-server": {
          "url": "https://example.com/mcp",
          "headers": { "Authorization": "Bearer <token>" },
          "enabled": true
        }
      }
    }
  },
  "processors": []
}

Project-based layout — for isolating multiple workloads with separate databases, feature flags, and route prefixes:

{
  "name": "Centian Server",
  "version": "1.0.0",
  "proxy": {
    "host": "127.0.0.1",
    "port": "9666",
    "timeout": 30
  },
  "projects": {
    "team-alpha": {
      "auth": true,
      "capabilities": {
        "taskVerification": { "enabled": true },
        "eventStorage": { "enabled": true },
        "ui": { "enabled": true }
      },
      "gateways": {
        "workbench": {
          "mcpServers": {
            "filesystem": { "command": "npx", "args": ["-y", "@modelcontextprotocol/server-filesystem", "/workspace"] }
          }
        }
      }
    }
  }
}

Each project gets its own SQLite database (~/.centian/projects/<slug>/events.sqlite) and its own route prefix. The flat layout is auto-migrated to a "default" project at runtime, so existing configs continue to work unchanged.
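Conceptually, the auto-migration wraps the flat settings in a single "default" project. The sketch below shows one plausible mapping, inferred from the two example configs; which keys actually move, and the migrate_flat_config helper itself, are assumptions and not Centian's implementation.

```python
import copy

# Assumption: these top-level keys become per-project settings after migration.
PROJECT_KEYS = ("auth", "gateways")


def migrate_flat_config(config: dict) -> dict:
    """Return a project-based copy of a flat config; project-based input is copied as-is."""
    migrated = copy.deepcopy(config)  # never mutate the caller's config
    if "projects" in migrated:
        return migrated

    project: dict = {}
    proxy = migrated.get("proxy", {})
    if "capabilities" in proxy:
        # In the flat layout capabilities sit under proxy; projects own them directly.
        project["capabilities"] = proxy.pop("capabilities")
    for key in PROJECT_KEYS:
        if key in migrated:
            project[key] = migrated.pop(key)

    migrated["projects"] = {"default": project}
    return migrated


flat = {
    "auth": True,
    "proxy": {"host": "127.0.0.1", "port": "9666", "capabilities": {"ui": {"enabled": False}}},
    "gateways": {"default": {"mcpServers": {}}},
}
print(sorted(migrate_flat_config(flat)["projects"]["default"]))
# ['auth', 'capabilities', 'gateways']
```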

Endpoints

- Aggregated gateway: http://127.0.0.1:9666/mcp/<gateway>

- Individual server: http://127.0.0.1:9666/mcp/<gateway>/<server>

- Project-scoped gateway: http://127.0.0.1:9666/<project>/<mcp>/<gateway>

- Project-scoped UI: http://127.0.0.1:9666/<project>/ui

In aggregated mode, tools are namespaced to avoid collisions. The "default" project uses unprefixed routes for backwards compatibility.
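The routing rules can be summarized in a small URL builder. This is a sketch under assumptions: it treats the mcp path segment as a literal for project-scoped routes, assumes individual servers can also be addressed under a project prefix, and gateway_url is a hypothetical helper that is not part of Centian.

```python
from typing import Optional


def gateway_url(
    gateway: str,
    project: str = "default",
    server: Optional[str] = None,
    base: str = "http://127.0.0.1:9666",
) -> str:
    """Build an MCP endpoint URL; the "default" project uses unprefixed routes."""
    prefix = "" if project == "default" else f"/{project}"
    url = f"{base}{prefix}/mcp/{gateway}"
    # Appending a server name addresses that server directly instead of the aggregate.
    return f"{url}/{server}" if server else url


print(gateway_url("default"))
# http://127.0.0.1:9666/mcp/default
print(gateway_url("default", server="filesystem"))
# http://127.0.0.1:9666/mcp/default/filesystem
print(gateway_url("workbench", project="team-alpha"))
# http://127.0.0.1:9666/team-alpha/mcp/workbench
```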

Security

Binding to 0.0.0.0 is only allowed if auth is explicitly configured in every project. This prevents accidental exposure.
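The rule above can be expressed as a simple pre-flight check. This is a minimal sketch, assuming the project-based config layout shown earlier; check_bind_address is a hypothetical helper and not Centian's actual validation code.

```python
def check_bind_address(config: dict) -> list[str]:
    """Return the projects that block a 0.0.0.0 bind because auth is not enabled."""
    host = config.get("proxy", {}).get("host", "127.0.0.1")
    if host != "0.0.0.0":
        return []  # a loopback or specific address is not subject to this rule
    projects = config.get("projects", {})
    # "Explicitly configured" is read strictly here: a missing auth key counts as a violation.
    return [name for name, project in projects.items() if project.get("auth") is not True]


config = {
    "proxy": {"host": "0.0.0.0", "port": "9666"},
    "projects": {
        "team-alpha": {"auth": True},
        "team-beta": {},  # auth not configured
    },
}

print(check_bind_address(config))  # ['team-beta']
```

An empty result means the bind is acceptable; any project name in the list should stop startup.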

Commands

| Command | Description |
|---|---|
| centian init | Initialize config (use -q for quickstart) |
| centian start | Start the proxy |
| centian auth new-key | Generate a new API key |
| centian server add | Add an MCP server |
| centian server ... | Manage MCP servers |
| centian config ... | Manage configuration |
| centian processor new | Scaffold a new processor |
| centian logs | View recent MCP logs |

Installation Options

| Method | Platform | Full UI | Command |
|---|---|---|---|
| Shell script | Linux, macOS | ✓ | curl -fsSL .../install.sh \| bash |
| Release binary | Linux, macOS, Windows | ✓ | Download from releases |
| go install | Any | ✗ | go install github.com/T4cceptor/centian@latest |
| Docker | Linux, macOS, Windows | ✓ | docker run t4ce/centian:latest |
| Homebrew | — | — | Planned |

Shell script (recommended)

curl -fsSL https://raw.githubusercontent.com/T4cceptor/centian/main/scripts/install.sh | bash

Supports --version and --install-dir flags. Installs to ~/.local/bin by default.

Release binaries

Download the appropriate archive from the latest release, extract it, and place centian on your PATH.

go install

go install github.com/T4cceptor/centian@latest

Requires Go 1.25+. Builds without the embedded web UI — use a release binary or Docker for the full UI.

Docker

# Full image (Linux, macOS, Windows)

docker run --rm -p 9666:9666 t4ce/centian:latest

# Alpine image

docker run --rm -p 9666:9666 t4ce/centian:latest-alpine

Homebrew

Homebrew support is planned.

Current Status

Centian is usable and actively developed, but it's pre-1.0 with deliberate gaps. We're transparent about what works and what doesn't yet.

Working today:

- MCP proxy with gateway aggregation and tool namespacing

- Project-based isolation: per-project databases, route prefixes, capabilities, and auth (multi-tenancy preparation)

- Programmable processor chain (CLI and webhook)

- Task verification with template-based workflows, frozen execution contracts, and per-phase tool governance

- SQLite event persistence with task/action correlation

- Embedded read-only UI for task run inspection

- Structured JSONL request logging

- Auto-discovery of existing MCP configs (centian init -p <path>)

- API key authentication with per-gateway and per-project scoping

Known limitations:

- Task run state is in-memory only (not restorable after restart)

- Governance is tool-level, not semantic (no read vs. write distinction within a tool)

- SQLite is the only storage backend (Postgres planned)

- OAuth support for downstream MCP servers is limited; not all flows are supported yet

- The UI is read-only (no task control actions from the UI yet)

- Approval-wait phases block tools but have no dedicated approve/resume mechanism yet

APIs and data structures may change before v1.0, particularly the processor interface and event schemas.

Development

make build # Build to build/centian

make install # Install to ~/.local/bin/centian

make test-all # Run unit + integration tests

make test-coverage # Test coverage report

make lint # Run linting

make dev # Clean, fmt, vet, test, build

Why "Control Plane"?

MCP proxies route traffic. Centian governs it.

The proxy is the mechanism — it's how Centian sees and controls every tool call. But the point isn't routing. The point is knowing what your agent is doing, constraining what it's allowed to do, and verifying that it did what you asked.

If you're using AI agents in environments where process matters — regulated industries, mission-critical workflows, or anywhere you need to answer "what did the agent do and why?" — that's what Centian is for.

License

Apache-2.0
