loading…

Mnemostack

FreeDurable hybrid memory for AI agents. Combines vector search, BM25, temporal retrieval, and optional Memgraph knowledge graph via reciprocal rank fusion. 6 MCP t

About

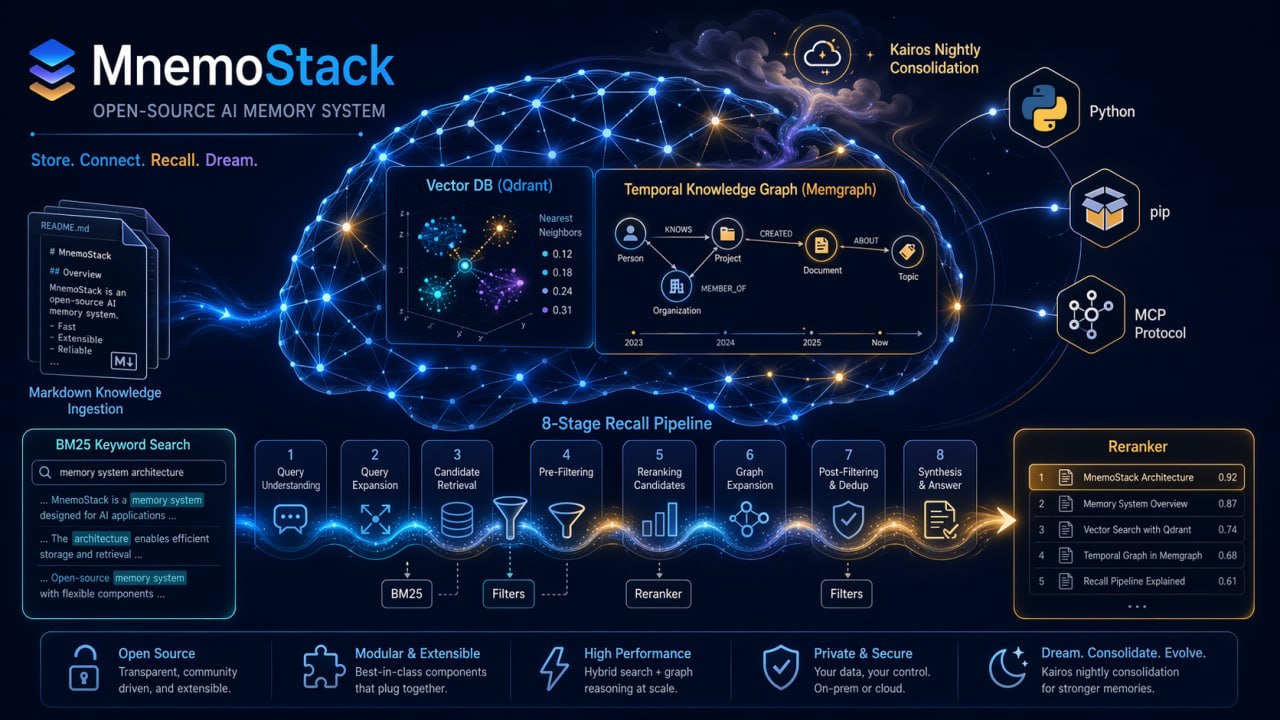

Durable hybrid memory for AI agents. Combines vector search, BM25, temporal retrieval, and optional Memgraph knowledge graph via reciprocal rank fusion. 6 MCP tools: health, search, answer, feedback, graph_query, graph_add_triple. Self-hosted with Qdrant backend.

README

PyPI Python versions CI License: Apache 2.0

Durable, searchable memory for AI agents.

Mnemostack was built to solve a real problem: AI agents forget useful context between sessions.

Every long-running agent eventually hits the same wall: context gets compacted, sessions restart, useful decisions disappear, and the next run pays the re-orientation tax again.

mnemostack gives agents a persistent memory layer they can query when the current context window is not enough. It is designed for agent workflows where memory needs to be durable, searchable, scoped, and explainable — not just embedded and hoped for.

Under the hood, Mnemostack uses hybrid retrieval across semantic search, keyword search, and graph relationships, then ranks results through a unified recall pipeline.

Status: Actively developed — public API is stable; new functionality lands additively in minor releases. Breaking changes are rare and called out in CHANGELOG.md.

MCP quickstart

This is the main entrypoint if you want to give Claude Desktop, Claude Code, Cursor, ChatGPT, or another MCP-capable agent durable memory.

1. Install

pip install 'mnemostack[mcp]'

Run a local Qdrant for the vector store:

docker run -p 6333:6333 qdrant/qdrant:latest

Optional: run Memgraph for graph-backed memory:

docker run -p 7687:7687 memgraph/memgraph:latest

2. Start the MCP server

export GEMINI_API_KEY=your-key-here

mnemostack mcp-serve --provider gemini --collection my-memory

Claude Desktop config example:

{

"mcpServers": {

"mnemostack": {

"command": "mnemostack",

"args": ["mcp-serve", "--provider", "gemini", "--collection", "my-memory"],

"env": {

"GEMINI_API_KEY": "your-key-here"

}

}

}

}

Claude will then be able to call mnemostack_search, mnemostack_answer, and graph tools.

3. Store memory

Index a folder of notes, docs, transcripts, or project context:

mnemostack index ./my-notes/ --provider gemini --collection my-memory --recreate

For a running app or assistant, use the streaming Ingestor API shown below to store messages as they arrive.

4. Recall memory

From an agent, ask a memory-style question and let the MCP tools retrieve the right facts.

From the shell, test the same collection directly:

mnemostack search "what did we decide about auth" --provider gemini --collection my-memory

mnemostack answer "what did we decide about auth" --provider gemini --collection my-memory

Why hybrid memory?

Vector search answers "what sounds similar?" Agent memory needs to answer "what matters in this context?" That requires exact matches, semantic similarity, relationship tracking, recency, user/project scope, and feedback from past recalls. Mnemostack uses hybrid retrieval so memory recall is reliable instead of embedding roulette.

Use cases

- Long-running coding agents that need to survive compaction and session restarts.

- Personal assistant memory for preferences, decisions, recurring tasks, and long-horizon context.

- Team or project knowledge recall across docs, notes, tickets, and chat history.

- Multi-agent context sharing through one durable memory backend.

- Session compaction recovery when the useful details no longer fit in the prompt.

Three ways to use Mnemostack

- MCP server — for agent users. Start

mnemostack mcp-serve, connect your agent, and use memory tools from the chat/runtime you already use. - HTTP API — for app developers. Run

mnemostack serveand call/recall,/answer,/feedback,/health,/metrics, or/docsfrom any language. - Python SDK — for library users. Compose retrievers, stores, rerankers, graph tools, and the streaming ingest API inside your own Python application.

Architecture

Mental model

Think of it as a storage hierarchy for agent memory:

- Context window = RAM. Fast, limited (typically 100K–200K tokens for many agent models; larger windows exist, but usable working context is often much smaller after tools, instructions, MCP output, and context rot — ~45K usable tokens on a 200K window is a realistic working number in long-running agent sessions. Clears on session restart.

- mnemostack corpus = Disk. Persistent, searchable, grows forever — every fact the agent has ever seen, queryable on demand.

recall(query)= page fault handler. When the agent needs something that isn't in the current context, it pulls the exact fact from storage with a single hybrid query — not a grep, not a reload of the whole corpus.

The practical effect: you stop re-explaining your project to the agent after every /compact. You stop losing momentum to the re-orientation tax that shows up in any agent with session compaction. mnemostack solves it at the library level, not tied to any single agent runtime.

How it works, in one paragraph

On each recall(query): the configured retrievers (Vector and Temporal by default, with BM25 and Memgraph when configured) run in parallel and return ranked lists. Reciprocal Rank Fusion merges them. The optional 8-stage pipeline can reweight results using query classification, exact-token rescue, gravity/hub dampening, freshness, inhibition-of-return, curiosity boosts, Q-learning weights supplied through its state store, and graph resurrection. An optional LLM reranker does a final ordering pass. You get a list of RecallResult with source, score, and provenance — ready to hand to a model.

Where mnemostack fits

Most memory tools in the agent ecosystem pick one axis and optimize for it: simple vector similarity for RAG, framework-bound memory tied to a specific agent library, platform-level runtimes with audit and compliance features, or CLI wrappers over a single vendor's session store. Each makes sense for its scope.

mnemostack takes a different slice: it is a recall quality layer, offered as a plain Python package. Four retrievers (Vector + BM25 + Memgraph + Temporal), RRF fusion, an 8-stage pipeline, and an optional LLM reranker — composed to handle mixed workloads on the same corpus: exact-token lookups, semantic queries, temporal questions, and multi-hop reasoning, without forcing you to choose one mode over another.

We are not a replacement for your agent framework and not a full platform runtime. We are the piece that actually finds the right fact in a growing corpus. Drop mnemostack into your own Python agent, or let a higher-level memory service call recall() over a plain function boundary. The retrievers, pipeline, and reranker are individually composable — take only the parts you need.

Design

See ARCHITECTURE.md for detailed design: pipeline stages, Qdrant schema, Memgraph temporal model, consolidation runtime, MCP tools.

Storage, index, and retrieval layers

- Storage/index layer: Qdrant stores vector points and payloads; BM25 indexes exact-token corpora; Memgraph stores temporal graph facts; the Temporal retriever handles time-aware vector recall.

- Fusion layer: Reciprocal Rank Fusion merges ranked lists from Vector, BM25, Memgraph, and Temporal retrievers, with optional static or adaptive weights.

- Recall pipeline: the 8-stage pipeline can classify the query, rescue exact tokens, dampen gravity/hubs, blend freshness, apply inhibition-of-return, add curiosity boosts, use Q-learning state, and resurrect graph-linked memories.

- Feedback loop: HTTP and MCP recall can apply existing state; explicit

/feedbackormnemostack feedbackupdates usefulness signals without silently training on every response. - Inference layer: optional LLM reranking and answer generation sit on top of recall, so retrieval still works when the LLM is unavailable.

Pipeline state

The 8-stage pipeline can use a small state store between calls (Q-learning weights, inhibition-of-return history, per-document gravity/hub counters). FileStateStore(path) persists it to a JSON file. HTTP recall applies existing state and can record inhibition-of-return exposure with --auto-record-ior; Q-learning updates only through explicit /feedback calls. CLI/MCP recall still apply existing state but do not collect feedback automatically. For deterministic benchmarks, call build_full_pipeline(enable_stateful_stages=False) so IoR/Q-learning/curiosity state cannot affect scores. For multi-process servers, implement your own StateStore (three methods: get(), set(), update()) backed by Redis or your database.

Graceful degradation

Any retriever can fail (Memgraph down, Qdrant unreachable, BM25 corpus empty). Recaller logs and continues with the remaining sources. The LLM reranker is wrapped in try/except by convention — if the LLM is rate-limited, the pre-rerank order is returned. This is deliberate: a memory stack that goes dark because one component hiccuped is worse than a slightly degraded one.

Comparison and benchmarks

On LoCoMo, Mnemostack reaches 82.5% strict accuracy in our evaluation setup. The table below includes our baseline runs and externally reported numbers for context. Results depend on dataset version, configuration, judge model, scoring rules, and query type. Treat externally reported numbers as directional unless they were run with the same harness and settings.

Benchmarks

Full LoCoMo runs use the official SNAP-Research dataset (10 samples / 1986 QA) from a clean state. Across the tables below: Strict = exact match, Combined = strict + partial. Counts in cells are correct / total.

Some LoCoMo cat_5 questions have empty ground-truth answers. Under the current scorer, these are counted as correct because there is no expected answer to match. To avoid overstating recall quality, we also report signal-only scores with those questions removed. Signal-only scores are computed on the 1,540 questions with non-empty ground-truth answers.

LoCoMo, current judge (gemini-3-flash-preview)

| Run | Strict (full) | Combined (full) | Strict (signal-only) | Combined (signal-only) |

|---|---|---|---|---|

| Baseline v0.3.0 (Vector + BM25 + 8-stage pipeline) | 76.7% (1524 / 1986) | 88.1% (1750 / 1986) | 70.0% (1078 / 1540) | 84.7% (1304 / 1540) |

Retrieval improvements (window_size=3, query expansion, top-K 25) |

82.5% (1639 / 1986) | 92.2% (1832 / 1986) | 77.5% (1193 / 1540) | 90.0% (1386 / 1540) |

Honest numbers disclaimer.

(full)is the headline aggregate across all 1986 questions, the format vendors typically report — some publish only their strongest sub-category, we publish the full aggregate because it's what actually predicts behavior on mixed workloads.(signal-only)strips thecat_5auto-pass artifact described above, so what you read there is the real recall quality on questions that have a ground-truth answer.

Per-category breakdown (retrieval-improvements run):

| Category | Strict | Combined |

|---|---|---|

cat_1 single-hop lists |

53.9% | 90.1% |

cat_2 temporal |

79.8% | 84.7% |

cat_3 open-domain reasoning |

63.5% | 77.1% |

cat_4 multi-hop reasoning |

86.1% | 93.5% |

cat_5 adversarial open-domain |

100.0% | 100.0% |

Notes:

- Judge model matters:

gemini-3-flash-previewis more accurate than the previous Gemini Flash judge on synonyms, partial matches, and empty ground truth. cat_5questions have empty ground truth in this new run and are auto-scored as correct by the benchmark harness. That makes the newcat_5strict score (446 / 446, 100.0%) useful for aggregate harness accounting, but not directly comparable to the historicalcat_5strict score (89.7%) from the older adversarial-question evaluation.- Pipeline: Vector retrieval with Gemini embeddings + BM25 + RRF + 8-stage reranking pipeline + Gemini Flash reranker.

Historical LoCoMo results (gemini-2.5-flash judge)

| Metric | First full run | mnemostack 0.2.1 |

|---|---|---|

| Strict | 66.4% (1319 / 1986) | 67.8% (1346 / 1986) |

| Partial | 12.8% (254 / 1986) | 12.6% (250 / 1986) |

| Wrong | 20.8% (413 / 1986) | 19.6% (390 / 1986) |

| Combined | 79.2% (1573 / 1986) | 80.4% (1596 / 1986) |

By question category (combined not tracked for the first full run):

| Category | First run Strict | 0.2.1 Strict | 0.2.1 Combined | Δ Strict |

|---|---|---|---|---|

cat_1 single-hop lists |

34.8% | 34.4% | 74.1% | −0.4pp |

cat_2 temporal |

64.5% | 69.8% | 77.9% | +5.3pp |

cat_3 open-domain reasoning |

31.2% | 41.7% | 49.0% | +10.5pp |

cat_4 multi-hop reasoning |

69.2% | 69.6% | 82.0% | +0.4pp |

cat_5 adversarial open-domain |

90.1% | 89.7% | 89.7% | −0.4pp |

Last historical run: 2026-04-27, mnemostack 0.2.1, same dataset, judged by gemini-2.5-flash.

Comparison with reported numbers from other systems

Caveat: different judges, evaluation protocols, and in some cases category cherry-picking. Vendor numbers below are taken at face value from their published material.

| System | LoCoMo correct |

|---|---|

| Hindsight (reported range) | 78–85% |

| Memobase (temporal subset) | 85% |

| mnemostack | 82.5% |

| Letta filesystem agent | 74% |

| Mem0 graph variant | ~68.5% |

| Zep (independently replicated) | 58.4% |

Real-corpus needle benchmark

LoCoMo measures generic long-term dialogue recall. We also run a private needle-in-haystack benchmark on the production workload that drove the original design — a ~17k-point memory stack indexed from a long-running assistant. Queries mix exact tokens (IP addresses, tickers), telegram IDs, paraphrased facts, and temporal probes.

| Metric | Value |

|---|---|

| recall@1 | 90% (9/10) |

| recall@5 | 100% (10/10) |

| recall@10 | 100% (10/10) |

| Query latency p50 | 1.26 s |

| Query latency max | 1.70 s |

Honest numbers disclaimer. Reporting only

recall@5 = 100%would look impressive, but it would also hide the harder top-1 behavior.recall@1 = 90%is what an agent reading only the top hit actually experiences, and the gap between@1and@5is where reranker quality (or the lack of it) shows up. We publish all three so you can read the metric that matches your downstream usage.

Useful because LoCoMo's failure modes (list exhaustion, open-domain reasoning) are orthogonal to what production memory stacks actually spend time on (find the specific fact the user mentioned weeks ago). This benchmark is not in the public repo; its methodology is in benchmarks/synthetic_longhorizon.py, which is the closest reproducible approximation.

Reproduce LoCoMo from a fresh clone

pip install -e '.[dev]'

bash benchmarks/download_locomo.sh # fetches SNAP Research's public dataset

export GEMINI_API_KEY=...

bash benchmarks/run_locomo.sh # full 10-sample run, writes results/ts.{json,log}

Details, category definitions, and notes on the judge protocol: benchmarks/README.md.

Who is this for?

Build it in if you need:

- Long-lived agent memory that survives session restarts and doesn't drift into irrelevance as the corpus grows.

- Recall quality on mixed workloads — exact-token lookups (IDs, tickers, error strings), semantic queries, temporal questions, multi-hop reasoning — not just one of them.

- A stack you can plug into your own infrastructure: bring your own embedding model, LLM, vector store, or graph DB.

Not the best fit if you only need a single call to text-embedding-3-small + cosine similarity — something simpler will do. mnemostack earns its complexity on mixed, long-horizon workloads.

Features

- 🧠 4-source hybrid retrieval — Vector (Qdrant) + BM25 (exact tokens) + Memgraph (knowledge graph) + Temporal (time-aware vector), all fused via Reciprocal Rank Fusion. Pluggable

Retrieverabstraction — add your own sources. - ⚖️ Weighted & adaptive RRF fusion —

reciprocal_rank_fusion(weights=[...])lets you lift sources you trust more;Recaller(adaptive_weights=True)picks a per-query-shape profile (exact-token / person / temporal / general). See the honest write-up below for where this helps and where it doesn't. - 🧪 HyDE retriever (opt-in) — embeds a hypothetical answer instead of the query. Useful for query↔document vocabulary gaps in documentation-style corpora; does not reliably help on dialogue-backed memory and always costs one extra LLM roundtrip per

search(). Not included in the defaultRecaller. - 🪜 8-stage recall pipeline — ClassifyQuery → ExactTokenRescue → GravityDampen → HubDampen → FreshnessBlend → InhibitionOfReturn → CuriosityBoost → QLearningReranker. Opt-in; stateful HTTP feedback is explicit via

/feedback, and recall exposure logging is off unless--auto-record-ioris enabled. - 🔁 LLM reranker — Gemini Flash (or any LLM) reorders top-K by relevance; catches cases where embedding similarity alone is too broad.

- ⚡ Async-friendly —

Recaller.recall_asyncdispatches retrievers in parallel; five concurrent HTTP recalls finish in roughly one single-recall wall-clock. - 🌍 Unicode-aware entity resolution — Memgraph retriever probes by

telegram_id, handle, and precomputedname_lowerso non-ASCII names match correctly (Memgraph'stoLower()lower-cases ASCII only). - 📥 Streaming

IngestorAPI — batched, idempotent, LRU-cached ingest from any Python code. Lazy iterator means large corpora ingest with bounded memory. Same(source, offset, text)→ same deterministic UUID-shaped content id, so re-runs are no-ops. - 🌐 HTTP API (optional) —

pip install 'mnemostack[server]'gives you/recall,/answer,/health,/docs, plus/metricsin Prometheus text format. See the HTTP server section below. - 🔌 Pluggable embeddings — Gemini, Ollama, or HuggingFace (local GPU), via provider registry

- 🤖 Pluggable LLM — Gemini Flash / Ollama for answer generation and reranking

- 📚 Temporal knowledge graph — facts have

valid_from/valid_until, query point-in-time state; graph resurrection stage recovers evicted-but-relevant memories. - 💬 Answer mode — inference layer synthesizes concise factual answers with source citations and confidence. Category-aware prompts (lists / temporal / multi-hop / inference / adversarial), specificity resolver, and

cat_3inference retry with query decomposition are on by default. - 📋 Knowledge synthesis —

synthesize(entity)rolls up everything memory knows about a person, project, or topic into a structured profile (SynthesisFact/SynthesisResult, markdown or JSON). CLI:mnemostack synthesize <entity>. Optional related-entities expansion via graph and LLM summarization pass. - 📏 Progressive Tiers API —

search --tier {1,2,3}andanswer --tier {1,2,3}bound output size (~50 / ~200 / ~500 tokens) so agents can pay only for the detail they actually need. Omit--tierfor unchanged full output. - ✂️ Chunkers + sliding window — plain, fixed-size, and

MessagePairChunkerfor chat transcripts (keeps user↔assistant pairs together). The newvector.window_sizeconfig carries adjacent-turn context inside each chunk;window_size=3was worth +5.8pp strict / +4.1pp combined on LoCoMo (v0.4.0). - 🔎 Query expansion + smart retry —

Recaller(expansion_llm=...)widens recall with reformulated queries;AnswerGenerator(retry_with_expansion=True)retries low-confidence answers with the expanded query and a HyDE-style hypothetical before giving up. Opt-in via--query-expansiononmnemostack answer. - ⚙ Consolidation runtime — phase orchestrator for nightly memory lifecycle

- 🔌 MCP server — expose memory tools to Claude Desktop, ChatGPT, Cursor, etc.

- 🛡 Graceful degradation — retrieval keeps working if graph or any retriever is down

Recall tuning: fusion weights & HyDE

Some of the newer knobs help in specific workloads and do nothing (or mildly hurt) in others. Measured, not promised — both are opt-in by design, and the default Recaller stays classical equal-weight RRF over Vector + BM25 (+ Memgraph + Temporal when supplied).

Recaller(adaptive_weights=True) — picks a weight profile per query shape:

| Query shape | Detection | Profile (bm25 / memgraph / vector / temporal) |

|---|---|---|

exact_token |

IPv4 / port / version / UUID / API-style tokens | 1.4 / 1.4 / 1.0 / 0.9 |

person |

"who is", @handle, username, contact, etc. |

1.0 / 1.5 / 1.0 / 0.9 |

temporal |

"when", "yesterday", "today", dates | 1.0 / 1.0 / 1.0 / 1.4 |

general |

everything else | classical equal-weight RRF |

Measured on a real production corpus with 10 needle probes: recall@1 went 50% → 60%, recall@5 stayed at 90% (zero regression). On LoCoMo (pure dialogue questions, all classified general), adaptive weights had no effect — the profile simply isn't triggered. Rule of thumb: turn it on for production ops-style workloads (IPs, tickers, IDs, named entities); leave it off, or don't expect a lift, for dialogue benchmarks. Static retriever_weights={...} always wins over adaptive when both are set.

HyDERetriever — generates a short hypothetical answer via your LLM and embeds that instead of the raw query, then fuses alongside the other retrievers. Useful when the question and the stored answer use very different vocabulary (documentation corpora, FAQ-style content). On our LoCoMo cat_3 smoke (conv-43, 14 open-domain reasoning questions) it moved accuracy from 14.3% to 21.4% (+1 correct answer); on dialogue-backed memory overall it's roughly a wash. It always costs one extra LLM call per search(), so budget accordingly and treat it as a tool for specific workloads rather than a default.

Utilities

Agent runtimes often wrap transcript messages in metadata envelopes before the real body, which can dominate embeddings and make unrelated turns look similar. Clean messages before chunking/indexing with strip_metadata_blocks():

from mnemostack.utils import strip_metadata_blocks

clean = strip_metadata_blocks(raw_message)

Built-in profiles cover OpenClaw webchat and Telegram envelopes; pass profiles= or extra_patterns= to tune the cleanup for your runtime.

Environment

| Variable | Purpose | Required for |

|---|---|---|

GEMINI_API_KEY |

Google Generative AI key | Gemini embedding + Gemini Flash LLM |

OLLAMA_HOST |

Ollama server URL (default http://localhost:11434) |

Ollama embeddings / LLM |

MNEMOSTACK_COLLECTION |

Qdrant collection name (default mnemostack) |

CLI convenience |

MNEMOSTACK_QDRANT_URL |

Qdrant URL (default http://localhost:6333) |

Remote Qdrant |

MNEMOSTACK_GRAPH_URI / MNEMOSTACK_MEMGRAPH_URI |

Memgraph bolt URI | Graph retriever / GraphStore |

MNEMOSTACK_PROVIDER / MNEMOSTACK_EMBEDDING_PROVIDER |

Embedding provider | CLI / HTTP / MCP |

MNEMOSTACK_LLM / MNEMOSTACK_LLM_PROVIDER |

LLM provider | Answer generation / reranking |

MNEMOSTACK_BM25_PATHS |

BM25 corpus paths separated by os.pathsep (: on Unix) |

CLI / HTTP / MCP BM25 retriever |

MNEMOSTACK_AUTO_RECORD_IOR |

true/false toggle for HTTP recall exposure logging |

HTTP stateful pipeline |

Only the providers you actually use need their keys. HuggingFace local-GPU embeddings need no keys at all. mnemostack init writes the same settings as YAML; explicit CLI flags override config/env defaults.

Setup and usage details

Try it in 30 seconds (Docker)

Fastest way to kick the tyres. No Python install, no manual Qdrant / Memgraph setup.

git clone https://github.com/udjin-labs/mnemostack && cd mnemostack

cp README.md examples/notes/ # any markdown will do

GEMINI_API_KEY=your-key docker compose -f examples/docker-compose.yml up -d --build

# Index the notes volume and ask a question over HTTP

docker compose -f examples/docker-compose.yml exec mnemostack \

mnemostack index /data --provider gemini --collection demo

curl -s http://localhost:8000/recall \

-H 'content-type: application/json' \

-d '{"query":"what is this about","limit":5}' | jq

The mnemostack container runs the HTTP API on port 8000 by default. Interactive docs are at http://localhost:8000/docs. Use docker compose exec mnemostack mnemostack <cmd> for CLI-style operations (index, search, health) against the same stack.

Tear down with docker compose -f examples/docker-compose.yml down -v (the -v wipes Qdrant + Memgraph state).

Prefer Ollama (no cloud key needed)? Run Ollama on the host, set OLLAMA_HOST=http://host.docker.internal:11434, and pass --provider ollama everywhere instead of gemini.

Installation

# From PyPI

pip install mnemostack

# Optional extras

pip install 'mnemostack[huggingface]' # local GPU embeddings

pip install 'mnemostack[mcp]' # MCP server

pip install 'mnemostack[dev]' # tests + linters

Run a local Qdrant for the vector store:

docker run -p 6333:6333 qdrant/qdrant:latest

Optionally a Memgraph for the knowledge graph:

docker run -p 7687:7687 memgraph/memgraph:latest

CLI quick start

# Health check

mnemostack health --provider ollama

# Index a directory of notes

mnemostack index ./my-notes/ --provider gemini --collection my-memory --recreate

# Hybrid recall

mnemostack search "what did we decide about auth" --provider gemini --collection my-memory

# Synthesize answer

mnemostack answer "what is the capital of France" --provider gemini --collection my-memory

# Record explicit feedback into the same state file used by the HTTP/MCP pipeline

mnemostack feedback <hit-id> --signal clicked --query "what did we decide about auth" \

--source-list vector --source-list bm25

# MCP server (for Claude Desktop, Cursor, etc.)

mnemostack mcp-serve --provider gemini --collection my-memory

Progressive tiers — pay only for the detail you need

search and answer accept an optional --tier {1,2,3} flag that bounds how

much output a call produces. Useful when a recall is called from a long-running

agent loop where full recall output would burn context unnecessarily.

# Tier 1 (~50 tokens) — just "is there anything in memory about this?"

# Returns id, score, source labels; no text.

mnemostack search "VPN failover" --tier 1 --provider gemini

# Tier 2 (~200 tokens) — triage with short snippets (~40 chars each)

mnemostack search "VPN failover" --tier 2 --provider gemini

# Tier 3 (~500 tokens) — full 200-char previews, up to 10 results

mnemostack search "VPN failover" --tier 3 --provider gemini

Omit --tier to get the full, uncapped output (backward compatible). Rule of

thumb for agents: tier 1 for navigation / existence checks, tier 3 only when

you actually need to read the memories. answer is already compressed, so it

needs a tier less often — use --tier 1 there to drop the SOURCES: block

when only the answer text is wanted.

Streaming ingest API

When you want to feed items into mnemostack from code — a chatbot that logs every message, a scraper, a daemon tailing a log — use the Ingestor. It handles batching, deduplication, and idempotency for you.

from mnemostack.embeddings import get_provider

from mnemostack.vector import VectorStore

from mnemostack import Ingestor, IngestItem

emb = get_provider("gemini")

store = VectorStore(collection="my-memory", dimension=emb.dimension)

store.ensure_collection()

ing = Ingestor(embedding=emb, vector_store=store, batch_size=64)

stats = ing.ingest([

IngestItem(text="alice joined acme on 2024-03-01", source="notes/alice.md"),

IngestItem(text="alice left acme on 2025-06-15", source="notes/alice.md", offset=100),

])

print(stats) # IngestStats(seen=2, embedded=2, upserted=2, skipped=0, failed=0)

Guarantees:

- Idempotent. Each item gets a deterministic UUID-shaped content id computed from

(source, offset, text). Re-running with the same input is a no-op: Qdrant upsert replaces the point onto itself, and an in-process LRU cache skips even the embedding call for items already seen in this session. - Batched. Items are embedded in batches of

batch_size, so provider HTTP overhead amortises across many items. - Streaming-friendly.

ing.stream(item_iter)yields per-batch stats so long feeds can be monitored without waiting for the whole stream to drain. - Graceful. If a single item fails to embed, it is counted as

failedbut the rest of the batch still lands.

# Long-lived feed (e.g. inside a FastAPI or Celery worker)

for item in your_firehose():

ing.ingest_one(IngestItem(text=item.body, source=item.channel, metadata={

"user_id": item.user_id,

"ts": item.ts.isoformat(),

}))

Python API

from mnemostack.embeddings import get_provider

from mnemostack.vector import VectorStore

from mnemostack.recall import Recaller, AnswerGenerator

from mnemostack.llm import get_llm

emb = get_provider("gemini")

store = VectorStore(collection="my-memory", dimension=emb.dimension)

store.ensure_collection()

# ... index data here ...

recaller = Recaller(embedding_provider=emb, vector_store=store)

results = recaller.recall("what did we decide", limit=10)

# Each result: .id .text .score .source ("vector" | "bm25" | "memgraph" | "temporal") .metadata

# Optional: synthesize a concise answer

gen = AnswerGenerator(llm=get_llm("gemini"))

answer = gen.generate("what did we decide", results)

print(answer.text, answer.confidence, answer.sources)

Full stack: 4-source retrieval + 8-stage pipeline + reranker

This is the baseline configuration used for the LoCoMo numbers above; the retrieval-improvements run adds window_size=3, query expansion, and top-K 25.

from mnemostack.embeddings import get_provider

from mnemostack.llm import get_llm

from mnemostack.vector import VectorStore

from mnemostack.recall import (

Recaller, Reranker,

VectorRetriever, BM25Retriever,

MemgraphRetriever, TemporalRetriever,

build_full_pipeline,

)

from mnemostack.recall.pipeline import FileStateStore

emb = get_provider("gemini")

store = VectorStore(collection="my-memory", dimension=emb.dimension)

retrievers = [

VectorRetriever(embedding=emb, vector_store=store),

BM25Retriever(docs=bm25_docs), # see "Building a BM25 corpus" below

MemgraphRetriever(uri="bolt://localhost:7687"), # optional

TemporalRetriever(embedding=emb, vector_store=store),

]

recaller = Recaller(retrievers=retrievers)

raw = recaller.recall("what did we decide", limit=30)

pipeline = build_full_pipeline(state_store=FileStateStore("/tmp/mnemo-state.json"))

reranked = pipeline.apply("what did we decide", raw)

reranker = Reranker(llm=get_llm("gemini"), max_items=20)

final = reranker.rerank("what did we decide", reranked)[:10]

Building a BM25 corpus

BM25Retriever needs a list of BM25Doc. Each doc is the atomic unit BM25 will rank — typically a paragraph or chunk of one of your source files:

from mnemostack.recall import BM25Doc

from pathlib import Path

docs = []

for i, path in enumerate(Path("my-notes/").rglob("*.md")):

text = path.read_text()

# chunk however you like — here: 800-char windows

for j in range(0, len(text), 800):

chunk = text[j : j + 800]

if chunk.strip():

docs.append(BM25Doc(

id=f"{path.name}:{j}",

text=chunk,

payload={"source": str(path), "offset": j},

))

For transcript-like inputs (user↔assistant messages), prefer MessagePairChunker so a question and its answer stay in the same chunk. See mnemostack.chunking.

If your canonical memory corpus is already stored in Qdrant payloads, build the BM25 corpus from the same collection instead of maintaining a separate markdown export. This keeps exact-token lookup aligned with vector search (message IDs, commit hashes, filenames, quoted phrases):

from qdrant_client import QdrantClient

from qdrant_client.models import FieldCondition, Filter, MatchValue

from mnemostack.recall import BM25Retriever

client = QdrantClient(host="localhost", port=6333)

bm25 = BM25Retriever.from_qdrant(

client,

"memory",

scroll_filter=Filter(

must=[FieldCondition(key="chunk_type", match=MatchValue(value="transcript"))]

),

limit=40_000,

)

hits = bm25.search("MERGED 71706", limit=5)

You can also call bm25_docs_from_qdrant(...) directly if you want to combine Qdrant payload chunks with local BM25Docs before constructing BM25Retriever.

HTTP server (optional)

If you want mnemostack available to callers that aren't Python — any service written in Node, Go, Rust, or a plain curl from a shell script — install the server extra and expose it over HTTP:

pip install 'mnemostack[server]'

export GEMINI_API_KEY=...

mnemostack serve --provider gemini --collection memory --port 8000

mnemostack serve binds to 127.0.0.1 by default. Use

--host 0.0.0.0 only behind your own auth/rate-limit layer.

Endpoints:

| Method | Path | Purpose |

|---|---|---|

GET |

/health |

Qdrant + Memgraph reachability + config summary |

POST |

/recall |

Hybrid recall with optional 8-stage pipeline |

POST |

/answer |

Recall + LLM answer synthesis with citations |

POST |

/feedback |

Explicit click/usefulness feedback for stateful learning |

GET |

/metrics |

Prometheus scrape endpoint (counters + summary histograms) |

GET |

/docs |

Interactive OpenAPI UI |

curl -s http://localhost:8000/recall \

-H 'content-type: application/json' \

-d '{"query": "what did we decide about auth", "limit": 10}' | jq

Response shape (abridged):

{

"query": "what did we decide about auth",

"results": [

{ "id": "...", "text": "...", "score": 0.72, "source": "notes/...md", "metadata": {} }

]

}

The /answer endpoint adds { answer, confidence, sources } alongside the memories. If the LLM isn't configured, /answer returns 503 and /recall still works — graceful degradation applies at the HTTP layer too.

Stateful learning is explicit. Start the server with --auto-record-ior if you want /recall and /answer responses to update inhibition-of-return state. Send user actions to /feedback to update Q-learning:

curl -s http://localhost:8000/feedback \

-H 'content-type: application/json' \

-d '{"hit_id":"...","signal":"clicked","query":"what did we decide about auth","sources":["vector","bm25"]}' | jq

signal is one of useful, clicked, or irrelevant; pass the retrievers list returned by /recall as sources so Q-learning can update the right source weights.

The same state update is available from CLI as mnemostack feedback ... and from MCP as mnemostack_feedback.

For production, front this with whichever reverse proxy you already use (nginx, Caddy, Traefik) and set an auth layer — mnemostack's server does not do auth itself on purpose; the goal is to plug into whatever you already have.

Knowledge graph (optional)

from mnemostack.graph import GraphStore

graph = GraphStore(uri="bolt://localhost:7687")

graph.add_triple("alice", "works_on", "project-x", valid_from="2024-01-01")

graph.add_triple("alice", "works_on", "project-y", valid_from="2024-07-01")

# Who was alice working on in March?

march_facts = graph.query_triples(subject="alice", as_of="2024-03-15")

Current graph facts use the explicit valid_until="current" marker. If you

created graph data with an older release, run

mnemostack graph-migrate-current --dry-run first, then

mnemostack graph-migrate-current to backfill legacy NULL markers.

MCP server for Claude Desktop

Add the entry below to your Claude Desktop config file:

- macOS:

~/Library/Application Support/Claude/claude_desktop_config.json - Windows:

%APPDATA%\Claude\claude_desktop_config.json - Linux:

~/.config/Claude/claude_desktop_config.json

{

"mcpServers": {

"mnemostack": {

"command": "mnemostack",

"args": ["mcp-serve", "--provider", "gemini", "--collection", "my-memory"],

"env": {

"GEMINI_API_KEY": "your-key-here"

}

}

}

}

Claude will then be able to call mnemostack_search, mnemostack_answer, and graph tools.

Custom embedding provider

from mnemostack.embeddings import EmbeddingProvider, register_provider

class MyProvider(EmbeddingProvider):

@property

def name(self): return "my-provider"

@property

def dimension(self): return 512

def embed(self, text): ...

def embed_batch(self, texts): ...

register_provider("my-provider", MyProvider)

Install for an OpenClaw-style agent

If you run an OpenClaw-style assistant with its own scripts/recall.sh or similar recall entry point, you don't have to wire mnemostack in by hand. Ask the assistant to do it and it can follow the steps below verbatim.

(These steps are written against the markdown-backed memory layout popularised by OpenClaw / OpenClaw — the reasoning applies to any assistant whose memory lives in MEMORY.md + daily notes.)

Assistant, read this section and follow the steps if your user asks you to install mnemostack.

Install the package into whichever Python env your recall scripts use.

pip install mnemostackConfirm with

python3 -c "import mnemostack; print(mnemostack.__version__)".Make sure Qdrant and (optionally) Memgraph are reachable. If either is missing, start them:

docker run -d -p 6333:6333 --name qdrant qdrant/qdrant:latest docker run -d -p 7687:7687 --name memgraph memgraph/memgraph:latest # optionalCheck with

curl -sf http://localhost:6333/collectionsandecho 'RETURN 1;' | cypher-shell -a bolt://localhost:7687.Populate the vector store with the assistant's existing memory. Almost every OpenClaw-style assistant stores memory as markdown files —

MEMORY.md,memory/*.mddaily notes, maybeUSER.md/TOOLS.md. Those files are the ground truth; Qdrant is just a searchable index on top of them. So "migration" here really means a first-time ingest.Two cases:

Case A — user has no Qdrant yet (default OpenClaw setup, markdown-only memory).

Stand up Qdrant (see step 2), then index the workspace in one command:

mnemostack index ~/.openclaw/workspace/ \ --provider gemini \ --collection memory \ --chunk-size 800This walks every

*.md/*.txtunder the workspace, chunks them, embeds each chunk with the chosen provider, and upserts into Qdrant. For a typical workspace (a few megabytes of notes) this finishes in a minute or two and stays well inside free-tier embedding quotas.Re-running is safe.

mnemostack indexuses a deterministic id per(source, offset, content)chunk, so a second run skips unchanged chunks (no duplicates, no wasted embedding calls) and only re-embeds chunks whose content changed or moved. Use--recreateif you deliberately want to wipe and rebuild from scratch.Memgraph is optional — skip it for a pure markdown-backed memory and

MemgraphRetrieverjust won't be part of the retriever list.Case B — user already has Qdrant / Memgraph populated by another plugin or a prior assistant run. Don't re-index. mnemostack reads existing collections and graphs as-is; payload like

chat_id,memory_class,valid_fromis preserved. Just pointVectorStore(collection=...)at the existing collection name andMemgraphRetriever(uri=...)at the existing graph.Critical for both cases: use the same embedding model for the query that was used for the stored points. Mixing providers/models across ingest and query silently returns garbage — no error, just bad results.

Point your recall entry at mnemostack. Swap your search helper to use the 4-retriever

Recallerfrom the "Full stack" example above. If your recall script has a--unifiedflag, route to mnemostack first and fall back to the legacy code path on any exception.Keep the fallback wired. Don't delete the existing recall implementation. Route mnemostack as the primary, fall back to the old recall on any exception. A bad upgrade becomes a one-minute rollback, not an outage.

Smoke-test on real queries from the user's memory, not toy strings. Ask at least one exact-token query (an IP, a ticker, an error string), one semantic query, one temporal query ("what did we decide last March"), and one list query. If any regresses vs the old recall, keep both running in parallel until you understand why.

Write a short note in the user's daily memory describing what changed: which command was used to ingest, which embedding provider, which collection name, and where the fallback lives. Future sessions need this to avoid re-indexing with a different model and trashing recall quality.

If you're a human operator and you'd rather do this yourself, the same steps apply; treat them as a checklist.

OpenClaw auto-recall companion plugin

This repository also includes a self-contained OpenClaw companion plugin in openclaw-plugin/. mnemostack remains the recall backend — vector + BM25 + graph + temporal retrieval, fusion, reranking, and answer synthesis — while the plugin connects that backend to OpenClaw's before_prompt_build hook.

Zero-config path: install mnemostack, run the daemon on the default local port, install/enable the plugin, and OpenClaw will automatically inject bounded recall answers for recall-style questions:

mnemostack serve --host 127.0.0.1 --port 18793

cd openclaw-plugin

npm test

The plugin defaults to http://127.0.0.1:18793/answer, supports English/Russian trigger defaults with extensible language-agnostic trigger lists, and can fall back to a Script backend such as recall-selfeval.sh when you are not running the daemon.

Roadmap

- Embedding provider registry (Gemini / Ollama / HuggingFace)

- LLM provider registry (Gemini Flash / Ollama)

- Qdrant wrapper

- BM25 + RRF recall pipeline

- Answer mode with confidence + citations

- LLM-based reranker

- Memgraph wrapper with temporal validity

- Consolidation runtime (phase orchestrator)

- CLI (

mnemostack health/search/answer/index/mcp-serve) - MCP server (Model Context Protocol)

- Text → graph triple extractor helpers (

mnemostack.graph.TripleExtractor) - Config file support YAML/JSON (

mnemostack.config,mnemostack init/configCLI) - Async variants for high-throughput servers (

mnemostack.vector.AsyncQdrantStore) - Docker compose examples (

examples/docker-compose.yml) - Reproducible LoCoMo benchmark harness in-tree (

benchmarks/run_locomo.sh) - First-class FastAPI/Starlette service wrapper (

pip install 'mnemostack[server]',mnemostack serve) - Async

Recaller.recall_asyncand parallel retriever dispatch (proven: 5 concurrent HTTP recalls complete in ~1x single-request wall-clock) - Benchmarks on longer-horizon synthetic corpora (

benchmarks/synthetic_longhorizon.py) - Streaming

IngestorAPI (mnemostack.ingest) - Prometheus

/metricsendpoint on the HTTP server - Unicode-aware

MemgraphRetrieverprobes (telegram_id, handle,name_lower) - Community health: Code of Conduct, Security policy, issue/PR templates

- Per-retriever latency in

/metrics(mnemostack_recall_<name>_latency_ms) - Weighted RRF fusion (

reciprocal_rank_fusion(weights=[...])) - Adaptive per-query-shape weights in

Recaller(adaptive_weights=True) -

HyDERetriever(opt-in, not in defaultRecaller) - Two-pass graph extraction (full + detail) in the agent's graph-sync pipeline

- Progressive Tiers API on

search/answer(--tier {1,2,3}, backward-compatible) - MCP integration guides for Claude Desktop/Code, Cursor, OpenClaw (

integrations/) - Silent-zero fix in

TemporalRetriever+ dispatch-by-type filter builder (0.2.0a1)

Contributing

Issues and PRs are welcome. Public APIs are intended to remain stable; new functionality should land additively where possible.

License

Apache 2.0 — see LICENSE.

How to install

Run in your terminal:

claude mcp add mnemostack -- npx